This is a big deal for me. For my entire professional life, I've been living with a Microsoft operating system on a daily basis. I started with DOS 5 back in 1993, then moved to Windows (I've been a power user of all these versions: 3.1, 3.11, 95, NT 4, 2000, XP). Now, though, I'm conducting both my personal and professional lives in OS X. And I'm giddy with joy. I only occasionally need to dip into Windows for one of the two applications for which I don't have a superior Mac replacement.

Dealing with low-level frustration and annoyance takes a measurable toll on your psyche. I'm not one to be overly religious about tools; I try to learn to use them to their utmost. However, I absolutely believe that my quality of life is better now, in small, subtle ways, mostly having to do with elegance and design. These "OS X rocks, Windows sucks ass" kinds of blog entries are generally short on substance, just an inarticulate expression of the intangible. Well, here are some concrete examples.

Windows machines have two ways to connect to networks, wired and wireless. On my Dell Latitude 610, when a wireless network is near, Windows pops up a task tray balloon notifying you that it would like to connect. Yet once you connect to a wired network, you no longer need the wireless one. Windows still pops up the annoying little balloon, about every 15 seconds, offering to connect you to a network you don't need. When you connect OS X to a wired network, it stops asking you about connecting to a wireless network because it figures out, correctly, that your networking needs are now met.

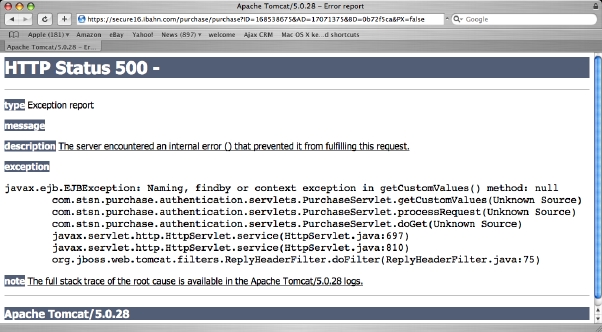

Another example: power users like to be able to get to the underbelly of all the GUI eye candy to get real work done. I would like access to the Excel command line, in the vain hope that I might be able to open multiple spreadsheets at a time. Yet, in their infinite wisdom, Microsoft has wired Windows to treat Office shortcuts differently, preventing you from getting to the underlying startup command. If you don't believe me, check out this screen shot or check for yourself.

I've done what all power users of Windows end up doing: I wrote a Ruby script that uses COM automation to open multiple spreadsheets. In fact, my toolbox is full of little scripts and such that get around annoying Windows behavior. Actually, I should be grateful to Microsoft for their annoyances: much of the Productive Programmer book features ways to make programmers more productive in that environment.
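For the curious, here is roughly what such a script can look like. This is a minimal sketch rather than my actual script: the helper names (`to_windows_path`, `workbook_paths`, `open_workbooks`) are made up for illustration, and it assumes a Windows machine with Excel installed and a Ruby build that includes the `win32ole` library.

```ruby
# Sketch only: assumes Windows with Excel installed.
# All helper names below are hypothetical, chosen for illustration.

# Turn a Ruby-style path into the backslash-separated form Excel expects.
def to_windows_path(path)
  path.gsub('/') { '\\' }
end

# Expand glob patterns into a sorted, de-duplicated list of absolute paths.
def workbook_paths(patterns)
  patterns.flat_map { |pattern| Dir.glob(pattern) }
          .map { |path| File.expand_path(path) }
          .uniq
          .sort
end

# Open every workbook in one visible Excel instance via COM automation.
def open_workbooks(paths)
  require 'win32ole' # only available on Windows, so load it lazily
  excel = WIN32OLE.new('Excel.Application')
  excel.Visible = true
  paths.each { |path| excel.Workbooks.Open(to_windows_path(path)) }
  excel
end
```

Saved as, say, `open_sheets.rb` (a hypothetical file name) with a final line of `open_workbooks(workbook_paths(ARGV))`, it could be run as `ruby open_sheets.rb budget.xls "reports/*.xls"`. Keeping the path handling separate from the COM calls means the former can be tried out on any machine, even one without Excel.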

Before I get a whole bunch of Spolsky-esque comments about why Windows is the way it is, let me state that I already understand. I know that it's terribly difficult to write an OS that handles the whole wide world of devices that Windows must support because it runs on so much hardware. And I know that one of Apple's big advantages is their tight coupling of hardware and software. I don't believe that Microsoft is evil or incompetent, and in fact I like some of what they create: .NET has some really nice, elegant parts (and some warts too, like all technologies). But, at the end of the day, as a user of the OS, the little things matter to me. If you cast aside history for the moment, using OS X is much more pleasant and refreshing, regardless of the reasons that got us here.
